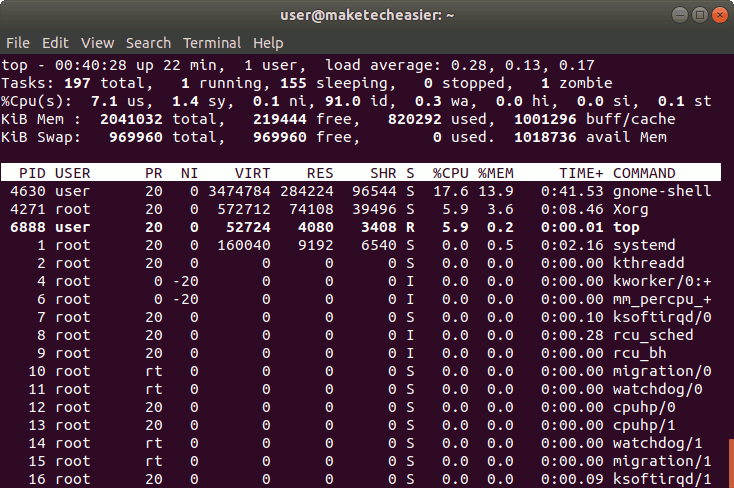

Put simply: if the kernel preloads data that you later use, overall performance improves; if the guess fails because the data is never needed, performance is not affected. A wrong guess is not a problem, because cache loading always runs under the hood and at lower priority. Once we run the app, memory use in our Linux distro grows, and so does our WSL 2 VM’s memory in Windows. As soon as one of your applications requires more memory, the kernel frees some of the cached memory: the memory it frees is the memory it filled with data that turned out not to be needed.

In fact, you can see that although 1370Gb of memory is allocated to cache, you also have 140Gb of available memory. Note that cached memory is always available to applications. The OS kernel tries to foresee what data you will need from mass storage and transfers it to RAM before you ask for it, so that subsequent loads are faster because the data is already mapped into memory.
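To check the same relationship between cached and available memory on your own machine, the following is a minimal sketch that parses `/proc/meminfo`. It assumes a Linux kernel recent enough to report the `MemAvailable` field (3.14 or later). The helper names `parse_meminfo` and `summarize` and the `SAMPLE` figures are made up for illustration; they are not taken from the screenshot.

```python
import os

# Hypothetical sample in /proc/meminfo format (illustrative values only).
SAMPLE = """\
MemTotal:       16384000 kB
MemFree:          819200 kB
MemAvailable:   12288000 kB
Buffers:          256000 kB
Cached:         11468800 kB
"""


def parse_meminfo(text):
    """Turn /proc/meminfo-style text into a {field: kilobytes} dict."""
    fields = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines that are not "Name: value" pairs
        parts = rest.split()
        if parts and parts[0].isdigit():
            fields[name.strip()] = int(parts[0])
    return fields


def summarize(fields):
    """Express cached, free and available memory as a share of total RAM."""
    total = fields["MemTotal"]
    return {
        "cached_pct": round(100 * fields["Cached"] / total, 1),
        "free_pct": round(100 * fields["MemFree"] / total, 1),
        "available_pct": round(100 * fields["MemAvailable"] / total, 1),
    }


if __name__ == "__main__":
    # Use the live file on Linux, otherwise fall back to the sample text.
    if os.path.exists("/proc/meminfo"):
        with open("/proc/meminfo") as f:
            text = f.read()
    else:
        text = SAMPLE
    print(summarize(parse_meminfo(text)))
```

On a system that has been running for a while, `free_pct` is typically small while `available_pct` stays much larger, because most of the cache can be reclaimed on demand.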

The other requirements are pretty low, so if you can, get another memory module. Whatever amount of RAM you have, it is quite normal for all the memory not allocated to programs or the kernel to be used for caching. Modern operating systems make extensive use of cached memory, especially to speed up high-latency operations such as storage I/O.
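One rough way to observe the effect of the cache on storage I/O is to time the same file read twice: once after asking the kernel to drop the file's cached pages, and once immediately afterwards. The sketch below assumes a Unix-like system where Python exposes `os.posix_fadvise`. The eviction request is only advisory, so the kernel may ignore it, and the timings depend heavily on the storage device. The function names `timed_read`, `evict_from_cache` and `compare_reads` are made up for this example.

```python
import os
import tempfile
import time


def timed_read(path, chunk_size=1 << 20):
    """Read the whole file; return (seconds_elapsed, bytes_read)."""
    start = time.perf_counter()
    total = 0
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            total += len(chunk)
    return time.perf_counter() - start, total


def evict_from_cache(path):
    """Ask the kernel to drop this file's cached pages (advisory only)."""
    if not hasattr(os, "posix_fadvise"):
        return False  # platform without posix_fadvise: nothing to do
    fd = os.open(path, os.O_RDONLY)
    try:
        os.posix_fadvise(fd, 0, 0, os.POSIX_FADV_DONTNEED)
    finally:
        os.close(fd)
    return True


def compare_reads(size_bytes=8 << 20):
    """Write a temp file, then time an uncached read and a cached read."""
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(os.urandom(size_bytes))
        tmp.flush()
        os.fsync(tmp.fileno())  # dirty pages cannot be dropped, so flush first
        path = tmp.name
    try:
        evicted = evict_from_cache(path)
        cold_s, n_cold = timed_read(path)   # served from storage, if evicted
        warm_s, n_warm = timed_read(path)   # most likely served from cache
        assert n_cold == n_warm == size_bytes
        return {"evicted": evicted, "cold_s": cold_s,
                "warm_s": warm_s, "bytes": n_cold}
    finally:
        os.remove(path)


if __name__ == "__main__":
    print(compare_reads())
```

If the eviction took effect, the second read is usually noticeably faster than the first, although on fast SSDs or with small files the difference can be lost in measurement noise.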
